GreenDart Inc. Team Blog

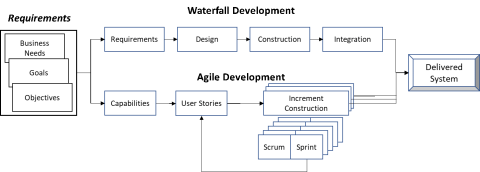

GreenDart has been a contributor to the IEEE-1012 Verification and Validation standard since 2012. For the past several years we have strongly promoted the addition of an Agile V&V annex to that standard. Good news: if all goes well, the next release of the standard will have an Agile V&V annex. GreenDart, along with a select group of IEEE-1012 members, is authoring that annex. The following figure contrasts traditional Waterfall and Agile system development methodologies, and is expected to be included in the upcoming IEEE-1012 release:

Agile V&V shares many strategies with the traditional V&V method. However, the development tempo of Agile, and the artifacts created during that process, require a different approach to achieve the desired V&V outcomes. As an example, the Sprint V&V process focuses on the pre-Sprint artifacts, to include analysis and assessments of the defined User Stories, the alignment with current development Product Backlog (PBL) and the metrics used to drive both the User Stories and PBL strategies.

GreenDart has significant experience performing Agile V&V for the most critical systems. We are experts in the Agile life cycle development strategy and have received awards for the planning, execution and reporting of complex Agile V&V efforts. How can we help you?


Author: Bedjanian

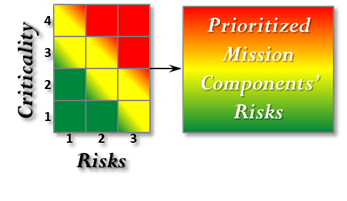

To maximize the impact of a V&V initiative, the V&V team benefits from a process that focuses precious resources on the most important challenges of the system development effort. The Criticality Analysis and Risk Assessment (CARA) methodology is a useful approach to identify those challenges, including for enterprise V&V assessments of program priorities and execution budget. Specifically, the CARA method is used to map key enterprise challenges and risks to targeted V&V activities. Once the prioritized list of candidate verification activities is identified, cost estimates are developed for each verification activity. Budget considerations define the end of the V&V tasking list. The results are provided to the Management team for final approval and coordination with the customer, development community, stakeholders, and external users.

The key to driving V&V activities on risk and program priorities is the application of the CARA process. Specifically, for targeted priority areas, the criticality of each function/requirement is defined by assessing performance, operations, cost, and schedule implications for each function. Those assessments are then rated from Catastrophic to Low. All of these assessments are quantifiable and repeatable, ensuring consistent ratings/rankings across the enterprise.

In parallel, the risk of each function/requirement is defined for complexity of the design, maturity of the applied technology, stability of the underlying requirements, testability of the function, and the developer’s experience in this particular functional area. As with the criticality assessment, these risk assessments are quantifiable and repeatable, ensuring consistent ratings/rankings across the enterprise.

Finally, we multiply the criticality and risk ratings to develop the CARA score. The CARA score defines both the priority of the function/requirement and the verification tasking required to assess it. Higher CARA scores drive more robust assessment activities.
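To make the multiplication concrete, here is a minimal Python sketch. The post names only the end points of the criticality scale (Catastrophic to Low), so the intermediate labels, the numeric values, the single combined risk rating, the band thresholds, and the sample functions are all assumptions for illustration, not GreenDart's actual scales.

```python
# Hypothetical rating scales: only "Catastrophic" and "Low" come from the
# post; the other labels and every numeric value are assumed.
CRITICALITY = {"Low": 1, "Moderate": 2, "Critical": 3, "Catastrophic": 4}
RISK = {"Low": 1, "Medium": 2, "High": 3}


def cara_score(criticality: str, risk: str) -> int:
    """Multiply the criticality rating by the risk rating."""
    return CRITICALITY[criticality] * RISK[risk]


def cara_band(score: int) -> str:
    """Map a score onto Low/Medium/High bands (thresholds are assumed)."""
    if score >= 8:
        return "High"
    if score >= 4:
        return "Medium"
    return "Low"


# Made-up functions, each with a criticality and an overall risk rating.
functions = [
    ("operator login", "Critical", "Low"),
    ("navigation update", "Catastrophic", "High"),
    ("report export", "Low", "Medium"),
]

# Rank functions so the highest CARA scores are addressed first.
ranked = sorted(
    ((name, cara_score(crit, risk)) for name, crit, risk in functions),
    key=lambda item: item[1],
    reverse=True,
)

for name, score in ranked:
    print(f"{name}: score={score}, band={cara_band(score)}")
```

In practice the risk rating would itself be derived from the five risk factors described above; how those factors are combined is not stated in the post, so the sketch takes a single rating as input.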

GreenDart has specific lists of tasking for each of the Low, Medium, and High CARA scores that cross the development lifecycle (requirement development through sustainment). We are prepared to apply the CARA process as part of our V&V offering. How can we help enable your V&V program success within your limited resources?

The transition from system development into operations, and the subsequent system maintenance and sustainment activities, present organizations with many challenges. V&V addresses these challenges and reduces the potential that anomalies will arise.

During the transition phase the V&V team reviews the transition and activation products, ensuring they meet system needs. Developers are responsible for developing the transition and activation products needed for deploying the system. The products include the preparation of the system for each user site, manuals for each user site, source files, system descriptions for the maintenance site, as-built system data for the maintenance site, and transition plans for transitioning to the maintenance site.

During sustainment, both the cost and risk of defect correction and system upgrades are high. Drivers for such modifications include hardware and/or operating system changes, user errors occurring during operations, other software enhancements, and problems with or replacement of components developed outside of the development plan.

During this phase, V&V performs an assessment of the developer products, which supports the transition of the modifications into the operational phase. Proposed modifications are analyzed to ensure that the changes to be made are correct and do not interfere with other parts of the system or jeopardize the mission. The V&V staff independently assesses such potential modifications and is tasked with identifying risks of introducing new errors into the system and/or other options to achieve the needed changes. These V&V assessments avoid costly rework.

GreenDart has experience performing all levels of Transition, Sustainment, and Maintenance V&V. How can we help you?

Most system test efforts focus on achieving stated program requirements. However, critical systems frequently require a level of capability validation that goes beyond the developer product assessment activities. For these critical systems, the V&V effort expands to include the development and application of an independent test environment, used to isolate and separately execute the targeted critical software. The independent team is completely segregated from the developer test efforts and must not be compromised by any of the following nominal developer/IV&V engagement tendencies:

- Assessments of developer test products and/or test strategies

- Use of any developer test hardware and/or software simulators

A major focus of independent testing is to prove the robustness of the software. Initially, the V&V team generates a test plan based on potential risks in the software from the V&V risk and hazards analysis. The plan identifies the effort required to support an independent testing activity. The V&V team develops test cases that stress software requirements at their limits (e.g., throughput and boundary conditions) to verify that the deliverable software handles such cases properly during execution. After the plan and test cases are approved, the V&V team executes the testing and analyzes the results. The final report includes evidence of the software performance during this testing, exposing any defects, risks, or limitations.
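As an illustration of stressing a requirement at its limits, the following Python sketch derives boundary-value test inputs for a numeric range. The requirement (message rates from 1 to 500 per second) and the helper names are hypothetical, chosen only to show the idea.

```python
def boundary_values(low: int, high: int, step: int = 1) -> list:
    """Return values just outside, on, and just inside each limit."""
    if low >= high:
        raise ValueError("low must be less than high")
    return [low - step, low, low + step, high - step, high, high + step]


def expected_valid(value: int, low: int, high: int) -> bool:
    """The expected verdict: inputs inside the closed range are accepted."""
    return low <= value <= high


# Hypothetical requirement: accept message rates from 1 to 500 per second.
LOW, HIGH = 1, 500

# Pair each boundary input with the verdict the software should give.
cases = [(v, expected_valid(v, LOW, HIGH)) for v in boundary_values(LOW, HIGH)]

for value, should_accept in cases:
    verdict = "accept" if should_accept else "reject"
    print(f"rate={value}: expect {verdict}")
```

Each pair would then be run against the software under test in the independent environment, and any mismatch between the expected and observed verdict recorded as a finding.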

Because of the isolated nature of independent testing, unique test environments are required to achieve the assessment objectives. Therefore, the independent test team comprises both test and software team members.

GreenDart has successful experience developing and executing independent test efforts. How can we help you?

System development typically concludes with a series of test events that culminate in the delivery of the desired capabilities. The test events start with the system element integration as pieces of the end-system are brought together. The corresponding test activity is called Integration Test. Once all the system elements are integrated, System Test is performed on the complete system. Finally, once all the System Test objectives are met then System Acceptance Test is performed to assure the final deliverable capability meets all customer requirements.

Correspondingly, there are Verification and Validation (V&V) activities that assure successful Integration, System, and Acceptance testing. These V&V activities follow a similar pattern of assessing test plans, procedures, and, once completed, test results against planned outcomes. This step is critical to assure the quality, completeness and accuracy of the developer’s test efforts.

INTEGRATION AND SYSTEM TEST V&V

During this phase, the developer integrates and tests the integrated system components which could include hardware components and reuse code. Integration testing confirms functional requirements compliance after the software sub-elements are integrated and directs attention to internal software interfaces and external hardware and operator interfaces. Subsequent system testing validates the entire program against system requirements and performance objectives.

V&V assesses the sufficiency and completeness of the developer's integration and system test program, identifying weaknesses, and focusing needed V&V test assessments accordingly. Previous validation activities have prepared this phase for developer software integration and incremental system testing.

V&V also assesses changes to the following developer products: system development and design documents, interface control documents, source code, software executables, test plans and procedures, test results and other products as described in the developer's Test Plan. As the developer's integration and system testing proceed, the V&V team carefully monitors critical testing activities and validates any changes that are made to the code or documentation.

So, we’ve completed the first two critical steps of the overall V&V effort, Requirements V&V and Design V&V. Through those two sequential V&V efforts we have identified errors early, which has saved on the total cost of developing the system (early error discoveries are less expensive to fix than later error detections). Of all the V&V phases, however, Code V&V offers the widest variation in V&V investment, and the different investment levels deliver different ROI results. In this blog we explore the various code V&V strategies, their cost, and their impact on the target program’s development cost and schedule.

Code V&V efforts range from an oversight activity up to the actual execution of developed code within a V&V simulated environment. The former code V&V strategy is relatively inexpensive and is consistent with the effort put into requirements and design V&V efforts. The latter is significantly more expensive, as will be seen below, and is performed for those systems where critical mission performance challenges must be identified early, without impact to the actual target system. A good example of target systems for detailed code V&V is mission-critical systems that, if they fail, can cause significant loss of life. So, the investment in detailed code V&V creates a Return On Investment (ROI) that is measured in both dollars and safety/performance considerations.

OVERSIGHT CODE V&V

For oversight code V&V, the V&V engineer reviews code artifacts (flat files, tool-enhanced code representations, etc.) and performs trace analysis to design specifications to ensure what was planned to be developed is actually what is developed. This top-level assessment is critical to maintain traceability, assuring delivered code addresses all of the program requirements. However, this effort does not address the actual performance attributes of the developed code.
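A trace analysis of this kind can be sketched as a set comparison between design items and code units. The identifiers, file names, and mapping format below are hypothetical; a real program would export them from its requirements or configuration management tooling.

```python
# Hypothetical trace matrix: design item -> code units claimed to implement it.
design_to_code = {
    "DES-001": ["nav/filter.c"],
    "DES-002": ["ui/login.c", "ui/session.c"],
    "DES-003": [],  # planned, but no implementing code is traced to it
}

# Hypothetical list of code units actually present in the delivery.
code_units = {"nav/filter.c", "ui/login.c", "ui/session.c", "util/debug_hook.c"}


def trace_gaps(trace: dict, units: set) -> tuple:
    """Return (design items with no code, code with no design, traced-but-missing code)."""
    untraced_design = sorted(d for d, files in trace.items() if not files)
    traced_units = {f for files in trace.values() for f in files}
    orphan_code = sorted(units - traced_units)
    missing_code = sorted(traced_units - units)
    return untraced_design, orphan_code, missing_code


untraced_design, orphan_code, missing_code = trace_gaps(design_to_code, code_units)
print("Design items without code:", untraced_design)
print("Code units without a design item:", orphan_code)
print("Traced units missing from the delivery:", missing_code)
```

The first list flags planned capability that was never built; the second flags delivered code that nothing in the design asked for. As the paragraph above notes, neither says anything about how the code performs.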

A team led by GreenDart has been awarded a $300M/7yr DHS Blanket Purchase Agreement contract. Under this contract GreenDart provides Test and Evaluation/Cybersecurity Resilience services across all DHS components including Customs and Border Protection, US Coast Guard, Transportation Security Administration, US Citizenship and Immigration Services, and Cybersecurity and Infrastructure Security Agency. Domains include Detection, Information Technology, Watercraft, Aviation, Vehicles, Unmanned Aerial Systems (UAS), and Counter-UAS Systems.

With this BPA prime contract, the GreenDart team can be expected to perform ITA services including Developmental and Operational Test and Evaluation (DT&E and OT&E) planning, execution, and reporting for DHS major acquisition programs. This also includes complex tests and assessments of various IT and non-IT system technologies into the DHS operational domain, and spans all DHS mission functional areas. GreenDart’s efforts also include cybersecurity and cyber resilience assessments. The technologies and systems that are approved through this BPA include Information Technology, Cyber Resilience, Technology Systems, Watercraft, Aviation, Unmanned Aerial Systems (UAS), Counter UAS, Vehicles, and Detection Systems. This DHS ITA BPA has a 7 year ordering period with a total estimated value of up to $300,000,000. Work is to be performed in the Washington, D.C. metro area as well as across the continental United States.

The ordering period for this contract is May 2021-May 2028.

The next stage of overall V&V effort is Design V&V. Once the initial V&V investment is made during the requirements development phase, that investment pays off during the design phase V&V effort. Specifically, the foundational V&V priorities identified in the requirements phase become design V&V decision drivers, leading to desired assessments of critical system capabilities.

System design transforms the developer's system requirements into discrete items that implement those requirements. The outputs of the system design phase are used to support the detailed design activity. Detailed design lays out the specific system units comprising each item including a unit's interfaces with other system units and each unit's internal structure, processes and data needs.

V&V is conducted during system design and detailed design phases. Assessed developer products include: System Requirements Specification, Interface Requirements Specification, System Design Document, Interface Design Document, System Test Plan, System Test Description (STD), and System Architecture Description (SAD). V&V assesses these developer artifacts and those presented during program reviews.

Design phase V&V solidifies the preliminary and final design specifications, and performs risk and security evaluations. Validating the design artifacts ensures that the coding and the unit testing which follow are secured by a sound design traceable to the driving requirements.

Developer errors found in the design phase of the effort by V&V have a significant impact on total system development costs. So, design V&V is a critical next step in the overall V&V process and has the potential to deliver excellent return on the V&V investment.

OVERVIEW

Given the current COVID-19 environment and the pressure that puts on the mobility of test teams to complete Operational Test and Evaluation requirements on key programs, much more emphasis is now placed on integrating various aspects of remote testing into test event planning, execution and reporting. The three main test planning, execution and reporting efforts augmented by a more aggressive remote testing requirement are: pre-event information capture from non-traditional operational test sources; operational observation; event execution data collection and in-process event early assessments coordination.

PRE-EVENT DATA/INFORMATION/CAPABILITY CAPTURE

Techniques: Developmental test results analysis; customer, contractor, user community coordination for suitable data sources.

Tools: Developer/community test environments.

Data Analytics is the science of analyzing raw data in order to draw insightful conclusions from it. A vast array of techniques and processes traditionally performed by data analysts have been automated into algorithms that digest and distill raw data into meaningful responses. This evolution of data analysis is a critical foundation in a world dominated by the availability and manipulation of data. Each day we use our smartphones, smart TVs, smart cars, and smart home devices in the Internet of Things. In everyday use we provide these devices with an immense amount of data on us as people and our market behaviors. We create patterns and natural rule sets that these systems process and iteratively learn from. The world of data analytics is an ever-evolving space, awaiting the perfect answer to questions not yet asked.

APPROACH

GreenDart operates at the forefront of industry by maximizing involvement in the world of data analytics. GreenDart is instrumental in advancing a law enforcement led program of creating a single data set of unified law enforcement data, giving analysts a single platform to develop and train more effective algorithms. This platform ingests multiple sources and allows analysts to create patterns and rule sets to identify outliers for further inspection. Once initial digestion is complete, the computer learns new patterns based on these initial algorithms, optimally resulting in predictive identification and rule tailoring. GreenDart is essential in addressing the gaps between requirements and reaching the solution expected by the customer. Great emphasis is put on the ability of the computer to learn new patterns with minimal input from the analyst.

Cybersecurity, cyber resilience, operational resilience. Once we think we have grasped the inputs, outputs, expectations, and requirements of one term, industry shifts and new terminology arises. The conversation is one of nuance, encumbered by terminology and boundary differences. These terms are fairly new and easily misused and misunderstood. For all intents and purposes within the IT space, Cyber Resilience is our term of choice. Cyber Resilience refers to an entity’s ability to withstand and recover from a cyber event. It is measurable with regard to the operational evaluation of an entity or system.

The key question Cyber Resilience addresses is:

How protected and resilient are the internal system attributes (applications, data, controls, etc.) assuming the threat has already penetrated the external cybersecurity protections?

For very high value or otherwise critical development efforts, a customer may elect to procure an independent assessment of the system developer’s T&E program. This effort is typically known as Test Program Verification or simply Test Verification. The Test Verification agent may be brought in by the developer (but kept distinct from the developer’s T&E organization), or the agent may be directly hired by the Government customer, to achieve a higher degree of independence.

Since the intent is to drive rigorous developmental T&E effort, many of the same activities and issues discussed in our DT&E write-up are relevant to this effort, although the perspective is different (e.g., the Test Verification agent does not plan or execute tests). Although the Test Verification agent and OT&E agent may both be members of an Integrated Test Team, their mutual interaction is apt to be limited.

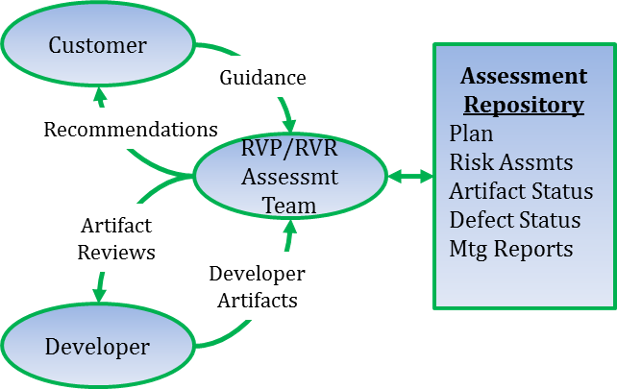

Test Verification involves many of the Test Verification steps defined in GreenDart’s Verification and Validation – Test description. However, the target for this effort is to review and assess the developer’s Requirements Verification Plan (RVP) and their Requirements Verification Report (RVR). Successful assessment of these developer products is critical to achieve customer confidence in the developer’s test program and, therefore, confidence in the successful delivery of the desired system. The figure below shows a notional Test Verification process flow.

RVP/RVR Assessment Process Flow

The key test optimization opportunity of an effective T&E effort is the design and execution of Design of Experiments (DOE). DOE is a systematic method to determine the relation between inputs or factors affecting a process or system and the output of that system. The system under test may be a process, a machine, a natural biological system or many other dynamic entities. This discussion concerns use of DOE for testing a software intensive system (a standalone program, or integrated hardware and software).

Test planners have a range of software test strategies and techniques to choose from in developing a detailed test plan. The choices made will depend on the integration level (i.e., unit test to system of systems T&E) of the target test article, as well as the specified and generated test requirements. Typically, the complete test plan will involve a combination of these techniques. Most of them are commonly known, but applying DOE for testing software intensive systems may not be as familiar. In this context, the design in “DOE” is a devised collection of test cases (experimental runs) selected to efficiently answer one or more questions about the system under test. This test case collection may comprise a complete software test plan, or a component of that plan.
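One way to picture a designed collection of test cases is to compare a full factorial design (every combination of factor levels) with a smaller design that still exercises every pair of levels at least once. The Python sketch below builds such a pairwise design with a simple greedy search. The post does not say which design types GreenDart uses, so pairwise coverage is a swapped-in example technique, and the factors and levels are hypothetical.

```python
from itertools import combinations, product


def pairs_of(run: tuple, names: list) -> set:
    """All pairs of (factor, level) settings that one run exercises together."""
    settings = list(zip(names, run))
    return set(combinations(settings, 2))


def pairwise_design(factors: dict) -> tuple:
    """Return (full factorial runs, greedy subset covering every level pair)."""
    names = list(factors)
    full = list(product(*(factors[name] for name in names)))

    # Every pair that appears in the full factorial must be covered.
    uncovered = set()
    for run in full:
        uncovered |= pairs_of(run, names)

    design = []
    while uncovered:
        # Greedy step: take the run that covers the most uncovered pairs.
        best = max(full, key=lambda run: len(pairs_of(run, names) & uncovered))
        design.append(dict(zip(names, best)))
        uncovered -= pairs_of(best, names)
    return full, design


# Hypothetical test factors for a software-intensive system.
factors = {
    "load": ["low", "nominal", "peak"],
    "link": ["wired", "satellite"],
    "mode": ["normal", "degraded"],
}

full, design = pairwise_design(factors)
print(f"full factorial runs: {len(full)}, pairwise runs: {len(design)}")
for run in design:
    print(run)
```

The greedy search is not guaranteed to find the smallest possible design, and pairwise coverage trades away detection of faults that need three or more factors to interact. Whether that trade is acceptable depends on the questions the test plan is meant to answer.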

DOE can save significant test time within the overall DT&E and/or OT&E efforts. In one particular instance GreenDart achieved 66% DT&E schedule savings through the successful application of DOE.

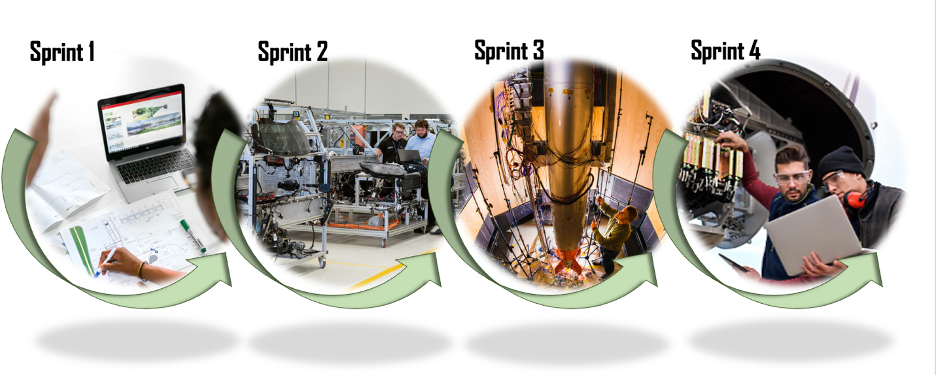

Agile system development has emerged as an alternative to the long-standing “waterfall” software development process. Agile development involves the rapid development of incremental system capabilities. These incremental development activities are called “sprints”. At the start the program selects requirements from the overall system requirements specification, builds user stories around those requirements, and allocates those user stories to specific sprint events. During each sprint event the developers go through a mini-waterfall effort of requirements, design, code, and test of a very small segment of the overall system. Once the sprint is complete the resultant product is typically integrated into the evolving overall system. Any unfulfilled sprint requirements go into a requirements holding ledger called the Product Backlog (PBK) for reassignment to future sprints. Sprint re-planning occurs, as needed, based on the accomplishments of previous sprints.

Testing within each sprint roughly resembles a very short waterfall testing effort, as described in our T&E description earlier. However, for this discussion, we focus on the unique Agile T&E activities that occur outside of each sprint. These activities include requirements verification, trace, and test results assessments.

T&E validates the user stories and associated critical technical parameters against top level requirements, identifying any issues early in the sprint development cycles. As sprints are executed and various levels of story “completion” are achieved, the PBK is updated to re-capture and re-plan those requirements that were not completed during the sprint or that underwent vital user-driven updates. Perturbations to delivered incremental capabilities and changes to go-forward strategies are quite common. These changes are captured by a dynamic T&E planning effort. Finally, because the programs are typically on a rapid 2-week sprint cadence, the T&E engagement and reporting cycles are quite short. This creates additional T&E/developer coordination opportunities, which improves T&E planning timeliness.

At the end of each sprint, the T&E team assesses the achieved sprint requirements, now integrated into the evolving target system. With each sprint integration, tests are performed for those specific requirements, along with regression tests on existing capabilities. Successful T&E results drive capability acceptance while T&E failures drive PBK updates and future sprint re-planning efforts.
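The end-of-sprint loop described above can be sketched in a few lines of Python: stories whose tests pass are accepted, and everything else returns to the Product Backlog for re-planning. The class, function, and story names are hypothetical, and a real program would track far more state (regression results, priorities, re-estimates).

```python
from dataclasses import dataclass, field


@dataclass
class Sprint:
    number: int
    stories: list = field(default_factory=list)


def close_sprint(sprint: Sprint, test_results: dict, backlog: list) -> list:
    """Accept stories whose T&E result is a pass; return the rest to the backlog."""
    accepted = [s for s in sprint.stories if test_results.get(s) is True]
    for story in sprint.stories:
        # Failed or untested stories go back for future sprint planning.
        if story not in accepted and story not in backlog:
            backlog.append(story)
    return accepted


# Hypothetical sprint content and T&E outcomes.
backlog = ["export report"]
sprint = Sprint(number=7, stories=["user login", "map overlay", "alert routing"])
results = {"user login": True, "map overlay": False}  # "alert routing" untested

accepted = close_sprint(sprint, results, backlog)
print("accepted:", accepted)
print("backlog:", backlog)
```

Treating an untested story the same as a failed one is a design choice in this sketch: nothing is accepted without a passing T&E result.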

The key step of an effective T&E effort, which drives product deployment, is the conduct of Operational Test and Evaluation (OT&E). OT&E is a formal test and analysis activity, performed in addition to and largely independent of the DT&E conducted by the development organization. OT&E brings a sharp focus on the probable success of a development article (software, hardware, complex systems) in terms of performing its intended mission once it is fielded. Probable success is evaluated primarily in terms of the “operational effectiveness” and “operational suitability” of the system in question. Operational effectiveness is a quantification of the contribution of the system to mission accomplishment under the intended and actual conditions of employment. Operational suitability is a quantification of system reliability and maintainability, the effort and level of training required to maintain, support and operate the system, and any unique logistics requirements of the system.

While DT&E comprehensively tests to the formal program requirements, OT&E concentrates on assessing the Critical Operational Issues (COI) identified for each program. Measures of Effectiveness (MOEs) are defined (ideally, early in the program life cycle) to support quantitative assessment of the COI. An MOE may reflect test results for one or several key requirements, while some (secondary) requirements may not map into any MOE. Similarly, the OT&E team uses Measures of Suitability (MOSs) to quantify development product performance against the “ilities” relevant to the particular development product. While the DT&E team may give little attention to evaluation of MOEs and MOSs, the OT&E team uses these technical measures extensively to focus their test planning and as a standardized and compact vehicle for communicating their findings to responsible decision authorities.

An OT&E campaign conventionally has two phases: an initial study and preparation phase, followed by a highly structured formal testing phase. Significant OT&E planning, assessment and preparation efforts occur throughout the first phase, which occurs in parallel to the development effort. Independent reports and recommendations are also made to the acquisition authority during this phase. The second phase, in DOD parlance known as Initial OT&E (IOT&E), follows final developer delivery of the target product, but precedes operational deployment. IOT&E is a series of scripted tests conducted on operational hardware, using developer-qualified deliveries, and under test conditions as representative as practical of the expected operational environment. If testing results meet pre-established criteria, IOT&E culminates with a recommendation to certify the development article for operational deployment. Once past the Full Rate Production milestone, Follow-on OT&E (FOT&E) of the development article may occur to verify the operational effectiveness and suitability of the production system, determine whether deficiencies identified during IOT&E have been corrected, and evaluate areas not tested during IOT&E due to system limitations. Additional FOT&E may be conducted over the life of the system to refine doctrine, tactics, techniques, and training programs and to evaluate future increments, modifications, and upgrades.

OT&E independence from the development program (including the program manager and immediate program sponsors) is a key attribute distinguishing it from DT&E. However, use of a common Test and Evaluation Master Plan (TEMP) is typical, and well controlled integrated DT/OT testing (integrated testing) is encouraged.

How GreenDart can help you: We are proven experts in designing and executing operational T&E programs for all hardware and software developmental program efforts. Please contact us.

A key component of an effective T&E effort is Developmental Test and Evaluation (DT&E). DT&E is conducted by the system development organization. DT&E is performed throughout the acquisition and sustainment processes to verify that critical technical parameters have been achieved. DT&E supports the development and demonstration of new materiel or operational capabilities as early as possible in the acquisition life cycle. After the Full Rate Production (FRP) decision or fielding approval, DT&E supports the sustainment of systems to keep them current and extend their useful life, performance envelopes, and/or capabilities. Developmental testing must lead to and support a certification that the system is ready for dedicated operational testing.

DT&E efforts include:

- Assess the technological capabilities of systems or concepts in support of requirements activities;

- Evaluate and apply Modeling and Simulation (M&S) tools and digital system models;

- Identify and help resolve deficiencies as early as possible;

- Verify compliance with specifications, standards, and contracts;

- Characterize system performance, military utility, and verify system safety;

- Quantify contract technical performance and manufacturing quality;

- Ensure fielded systems continue to perform as required in the face of changing operational requirements and threats;

- Ensure all new developments, modifications, and upgrades address operational safety, suitability, and effectiveness;

- During sustainment upgrades, support aging and surveillance programs, value engineering projects, productivity, reliability, availability and maintainability projects, technology insertions, and other modifications.

DT&E is typically conducted to verify and validate developer requirements, for example those specified in documents such as the Software Requirements Specification (SRS), which are derived from customer top-level specifications. The reports and other products of this (i.e., component) level of verification may also serve as required inputs to the system acceptance process.

How GreenDart can help you: We are proven experts in designing and executing T&E programs for all hardware and software developmental program efforts. Please contact us.
